How Blockchain Is Solving AI's Trust Problem

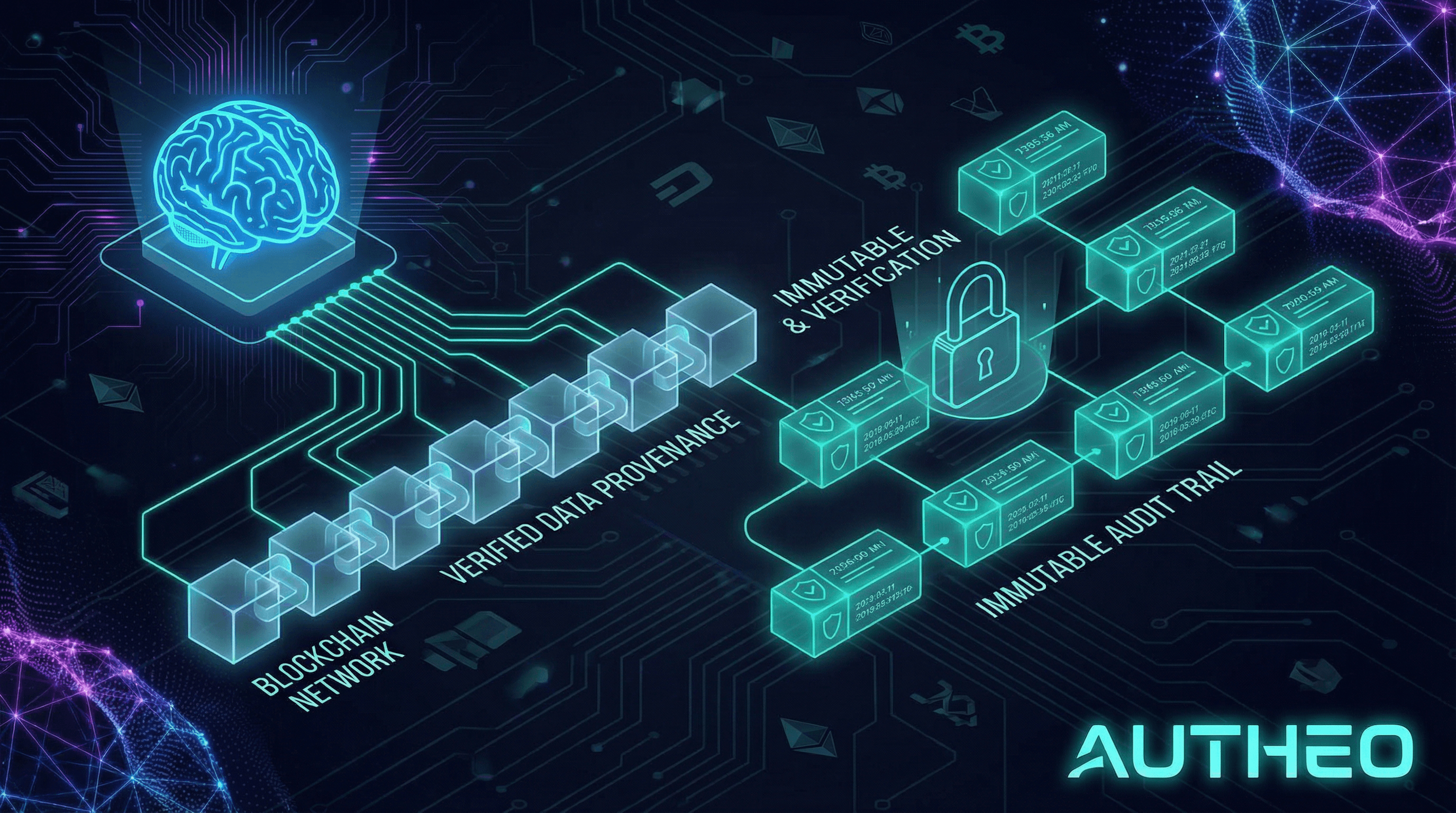

Blockchain solves AI's trust problem by providing immutable audit trails, verifiable data provenance, and tamper-proof records. These allow organizations to prove where AI training data came from, whether it was altered, who accessed it, and which model made which decision, transforming 'black box' AI into auditable, accountable systems that regulators, customers, and auditors can actually trust.

The Core Trust Problem in AI

AI systems are only as trustworthy as the data they were trained on and the decisions they produce, but in 2026, both of these are increasingly under scrutiny. The EU AI Act, adopted in 2024, mandates traceability and risk management for AI models and their data sources. Similar frameworks are emerging across the US and Asia. Organizations are under regulatory pressure to prove where data comes from, how models make decisions, and who is accountable for outcomes.

The data authenticity crisis is accelerating this pressure. With generative AI producing vast volumes of synthetic content, distinguishing authentic training data from AI-generated fabrication is becoming critically difficult. Data poisoning, where malicious actors insert corrupted data into AI training sets, is a recognized threat in high-stakes domains from medical AI to financial fraud detection. Industry standards like the C2PA 'content credentials' framework, supported by Adobe, Microsoft, and major media organizations, track provenance at scale through cryptographically signed metadata rather than a blockchain, and on-chain records can complement them by anchoring those signatures in a shared, tamper-evident ledger.

How Blockchain Creates Verifiable AI

The core mechanism is straightforward. When training data is collected, a cryptographic hash of the dataset is recorded on-chain with a timestamp, source metadata, and unique identifiers. Any subsequent modification to the data changes its hash, so tampering is detectable by recomputing the hash and comparing it against the on-chain record. This creates an immutable chain of custody: auditors or regulators can check the entire training lineage against the chain instead of relying on the operator's own logs.
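As a minimal sketch of this mechanism, the Python below fingerprints a dataset with SHA-256, stores the digest in a provenance record, and later detects a one-byte change. The record fields, the `ledger` list standing in for a chain, and the sample CSV are all illustrative assumptions, not any particular network's schema.

```python
import hashlib
import time


def fingerprint(data: bytes) -> str:
    """Return the SHA-256 hex digest of the dataset bytes."""
    return hashlib.sha256(data).hexdigest()


def make_record(data: bytes, source: str, dataset_id: str) -> dict:
    """Build the record that would be anchored on-chain (hypothetical schema)."""
    return {
        "dataset_id": dataset_id,
        "source": source,
        "timestamp": int(time.time()),
        "sha256": fingerprint(data),
    }


def matches_record(data: bytes, record: dict) -> bool:
    """Recompute the hash and compare it with the recorded one."""
    return fingerprint(data) == record["sha256"]


# Stand-in for the chain: an append-only list (assumption for illustration).
ledger = []
original = b"patient_id,age,label\n1,54,0\n2,61,1\n"
ledger.append(make_record(original, source="hospital-a", dataset_id="ds-001"))

# Flip a single label and check both versions against the record.
tampered = original.replace(b"61,1", b"61,0")
print(matches_record(original, ledger[0]))
print(matches_record(tampered, ledger[0]))
```

Only the digest and metadata go into the record, so the dataset itself never has to leave the organization's storage.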

Merkle trees extend this capability. By organizing data into a tree structure where each node contains the hash of its children, a Merkle tree allows verification of any specific dataset element without downloading the entire dataset: only the relevant branch of the tree needs to be checked. This efficiency makes blockchain-based data verification practical even for petabyte-scale AI training datasets.
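The branch check can be sketched in a few lines of Python. This is a simplified illustration, assuming SHA-256, a binary tree, and duplication of the last node on odd-sized levels; production designs typically add domain separation between leaf and internal hashes, which is omitted here. The function names are hypothetical.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    """Return every tree level, from hashed leaves up to the single root."""
    if not leaves:
        raise ValueError("at least one leaf is required")
    level = [h(leaf) for leaf in leaves]
    levels = []
    while True:
        if len(level) > 1 and len(level) % 2 == 1:
            level = level + [level[-1]]  # pad odd levels by duplicating the last node
        levels.append(level)
        if len(level) == 1:
            return levels
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def prove(levels: list[list[bytes]], index: int) -> list[tuple[bytes, bool]]:
    """Collect the sibling hash at each level, flagged if it sits on the left."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        index //= 2
    return proof


def verify(leaf: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Rebuild the path to the root from one leaf and its sibling hashes."""
    node = h(leaf)
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root


records = [f"record-{i}".encode() for i in range(5)]
levels = build_levels(records)
root = levels[-1][0]          # the value that would be anchored on-chain
proof = prove(levels, 3)      # proof for records[3]
print(verify(records[3], proof, root))
print(verify(b"forged-record", proof, root))
```

The proof holds one hash per tree level, so its size grows with the logarithm of the number of records rather than with the dataset itself.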

Zero-knowledge proofs (ZKPs) add a critical privacy dimension. With ZKPs, an organization can prove that their AI model was trained on data meeting specific quality criteria (bias-free, ethically sourced, licensed for commercial use) without revealing the actual data. This solves the privacy paradox: blockchain's transparency enables verification while ZKPs preserve the confidentiality of proprietary datasets.

Practical Applications Across Industries

Financial Services: JPMorgan and other institutions are deploying blockchain-backed AI systems where every model decision is logged with a cryptographic reference to the training data and model version that produced it. In anti-money laundering (AML) workflows, this means compliance teams can definitively demonstrate to regulators what data the AI used to flag a suspicious transaction. Fraud detection accuracy reaches 95% in blockchain-verified AI deployments, according to industry analyses.
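One way to picture this kind of decision logging is a hash-chained log in which each entry references the model version and the training-data digest, and also commits to the previous entry. The sketch below is an assumption-laden illustration in Python, not a description of any institution's actual system; the field names and sample values are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(body: dict) -> str:
    """Hash a canonical JSON encoding of the entry body."""
    encoded = json.dumps(body, sort_keys=True).encode()
    return hashlib.sha256(encoded).hexdigest()


def append_decision(log: list, decision: str, model_version: str,
                    dataset_sha256: str) -> dict:
    """Append a decision that commits to the model, the data, and the prior entry."""
    body = {
        "decision": decision,
        "model_version": model_version,
        "dataset_sha256": dataset_sha256,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry = {**body, "hash": entry_hash(body)}
    log.append(entry)
    return entry


def chain_is_valid(log: list) -> bool:
    """Recompute every hash and check each link to the previous entry."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev or entry_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


log = []
data_digest = hashlib.sha256(b"training-set-v7").hexdigest()
append_decision(log, "flag tx-1001 as suspicious", "aml-model-2.3", data_digest)
append_decision(log, "clear tx-1002", "aml-model-2.3", data_digest)
print(chain_is_valid(log))

log[0]["decision"] = "clear tx-1001"  # rewrite history after the fact
print(chain_is_valid(log))
```

Because every entry includes the hash of the one before it, editing an old decision invalidates the chain from that point on, which is the property a compliance team would rely on.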

Healthcare: Hospital AI systems using blockchain for data provenance can satisfy HIPAA requirements while participating in federated learning, where multiple hospitals collaboratively improve AI models without sharing patient records. Blockchain records only the model improvements (gradients), not the underlying patient data. The overall AI system delivers 60% faster data reconciliation compared to traditional systems, while reducing data breaches by 40% through decentralized storage and cryptographic controls.
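A toy Python sketch of this federated pattern follows. It assumes each site publishes only a digest of its model update, and a coordinator averages the updates whose digests match. The site names, update values, and the `ledger` dictionary standing in for on-chain storage are hypothetical, and real deployments would add signatures and secure aggregation.

```python
import hashlib
import struct


def update_digest(update: list[float]) -> str:
    """Hash a fixed binary encoding (little-endian doubles) of a model update."""
    packed = struct.pack(f"<{len(update)}d", *update)
    return hashlib.sha256(packed).hexdigest()


def federated_average(updates: list[list[float]]) -> list[float]:
    """Average the updates element by element."""
    count = len(updates)
    return [sum(column) / count for column in zip(*updates)]


# Each site trains locally; only the update leaves the site, never patient rows.
updates = {
    "hospital-a": [0.10, -0.20, 0.05],
    "hospital-b": [0.30, 0.00, -0.05],
}

# Stand-in for on-chain storage: site name -> digest of its update.
ledger = {site: update_digest(update) for site, update in updates.items()}

# The coordinator accepts only updates that match their recorded digest.
verified = [u for site, u in updates.items() if update_digest(u) == ledger[site]]
global_update = federated_average(verified)
print(global_update)
```

The ledger here holds digests rather than raw gradients, which keeps the on-chain footprint small while still letting an auditor confirm which updates fed the global model.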

Supply Chain: AI systems that predict supply chain disruptions or verify product authenticity become far more defensible when their data provenance is verifiable. Supply chain AI can raise counterfeit detection rates from 65% to 95% and cut product verification time from 14 days to seconds, but only if the underlying data is trustworthy. Blockchain gives enterprises the proof of data integrity that makes these numbers defensible in court, regulatory proceedings, and customer communications.

The Regulatory Imperative

Regulations are creating a compliance deadline for verifiable AI. The EU AI Act requires organizations deploying high-risk AI (medical devices, credit scoring, law enforcement, critical infrastructure) to maintain technical documentation including data governance and training data characteristics. The EU's coordinated post-quantum cryptography roadmap intersects here too: organizations need quantum-resistant record-keeping for AI audit trails that must remain valid for decades.

Blockchain gives AI memory and accountability; AI gives blockchain context and intelligence. This is how Zodia Custody described the convergence in their 2026 predictions, and major institutions are now acting on it. Custody for AI records (verified AI outputs, model attestations, decision logs) is emerging as a new need, as institutions may soon need immutable evidence of AI decisions in financial forecasts, medical diagnoses, and legal proceedings.

Autheo's Role in the AI-Blockchain Trust Stack

Autheo's architecture is uniquely positioned for AI data provenance. The Autheo Eigensphere Engine (AEE) runs AI inference natively within the network's validator nodes, meaning AI decisions are executed on the same infrastructure that maintains the blockchain's immutable record. This isn't a bolt-on integration; it's a native capability where compute, consensus, and auditability are designed to work together.

THEO AI, embedded in Autheo's runtime, can be used to build AI-native applications where both the intelligence and the audit trail are first-class protocol features. Autheo's post-quantum DID (Decentralized Identity) ensures that the identities of data contributors, model operators, and AI agents are themselves cryptographically verifiable, closing the loop on the entire AI trust stack.

Key Takeaways

- Blockchain solves AI's trust problem by creating immutable audit trails for training data provenance, model decisions, and data access history.

- Cryptographic hashing + Merkle trees enable efficient verification of AI training data at any scale without downloading full datasets.

- Zero-knowledge proofs allow organizations to verify data quality without revealing proprietary data, solving the privacy paradox.

- The EU AI Act and similar frameworks emerging in the US and Asia are creating compliance deadlines: organizations must prove data provenance, model traceability, and decision accountability.

- Real-world results: 95% fraud detection accuracy, 60% faster data reconciliation, 40% fewer data breaches in blockchain-verified AI deployments.

- Autheo's native AI integration means AI decisions and their audit trails are co-located at the protocol level, not stitched together from separate systems.

Build trustworthy AI applications on Autheo's verifiable infrastructure. Visit autheo.com or explore developer tools at docs.autheo.com.

Theo Nova

The editorial voice of Autheo

Research-driven coverage of Layer-0 infrastructure, decentralized AI, and the integration era of Web3. Written and reviewed by the Autheo content and engineering teams.
